Update February 2022: Millicast has joined the Dolby.io team!

Read the news article for more information, or see how we have integrated at SXSW and NAB.

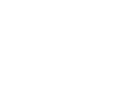

Demuxed 2021 is a wrap! Dolby.io participated as a community sponsor, which let us engage with many members of the online video developer community at an engineer-first event with quality technical talks about video. This year's event was held fully virtually, which allowed a diverse range of speakers across different countries, industries, occupations, and backgrounds to share their stories. Being remote also allowed the organizers to improve the viewing experience: all talks were pre-recorded to ensure consistent quality, and each included closed captioning and American Sign Language interpretation.

All chat was handled in the video-dev Slack group, an already active community of video engineers, where attendees could convene in the general Demuxed channel or break out into more specific topics such as hls.js, or even our own Dolby channel. Q&A happened in threads, and highlights were surfaced in the stream for all to see!

Here are a few talks that we enjoyed and suggest learning more about!

WebRTC

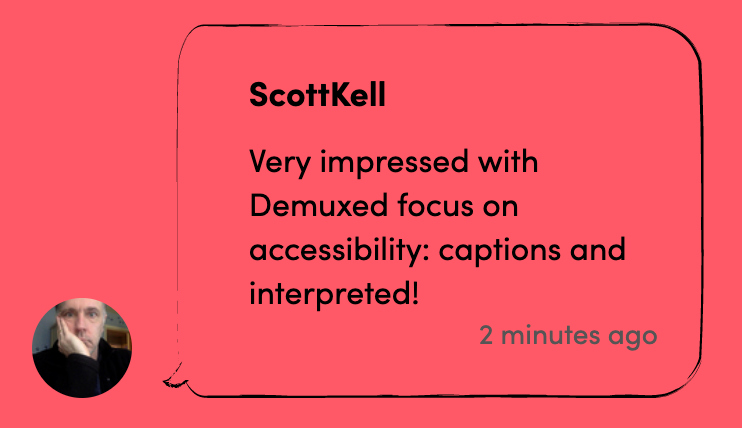

Ryan Jespersen started off the conference with a talk on the WebRTC-HTTP ingestion protocol (WHIP). WHIP was created because the streaming industry lacked a standard signaling protocol for ingesting media into a service with WebRTC. Jespersen pointed to examples like Google Stadia as illustrations of smarter WebRTC handling, and showed off a fork of OBS Studio built to leverage the same WebRTC implementation that most popular browsers use. Read more in the document they published with the IETF.
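To make the signaling idea concrete, here is a minimal sketch of the HTTP exchange WHIP standardizes: the publisher sends its SDP offer in an HTTP POST with `Content-Type: application/sdp`, and the server replies with `201 Created`, an SDP answer in the body, and a `Location` header naming the session resource. The helper names, the endpoint URL, and the token below are hypothetical, and the sketch leaves out the WebRTC side entirely.

```typescript
// Sketch of the HTTP side of a WHIP publish. Function and type names are
// illustrative assumptions, not part of any library.

interface WhipRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

interface WhipSession {
  resourceUrl: string; // taken from Location; may be relative to the endpoint
  sdpAnswer: string;
}

// Build the request that carries the publisher's SDP offer to the endpoint.
function buildWhipOffer(endpoint: string, sdpOffer: string, token?: string): WhipRequest {
  const headers: Record<string, string> = { "Content-Type": "application/sdp" };
  if (token !== undefined) {
    // WHIP endpoints typically authenticate with an HTTP bearer token.
    headers["Authorization"] = `Bearer ${token}`;
  }
  return { url: endpoint, method: "POST", headers, body: sdpOffer };
}

// Interpret the reply: 201 Created, SDP answer in the body, and a Location
// header pointing at the newly created session resource.
function parseWhipAnswer(status: number, location: string | null, body: string): WhipSession {
  if (status !== 201) {
    throw new Error(`WHIP endpoint returned ${status}, expected 201`);
  }
  if (location === null) {
    throw new Error("WHIP response is missing the Location header");
  }
  return { resourceUrl: location, sdpAnswer: body };
}
```

In a browser, the offer would come from `RTCPeerConnection.createOffer()`, the request would be sent with `fetch`, and the answer would be applied with `setRemoteDescription`. Ending the broadcast is an HTTP `DELETE` on the session resource URL.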

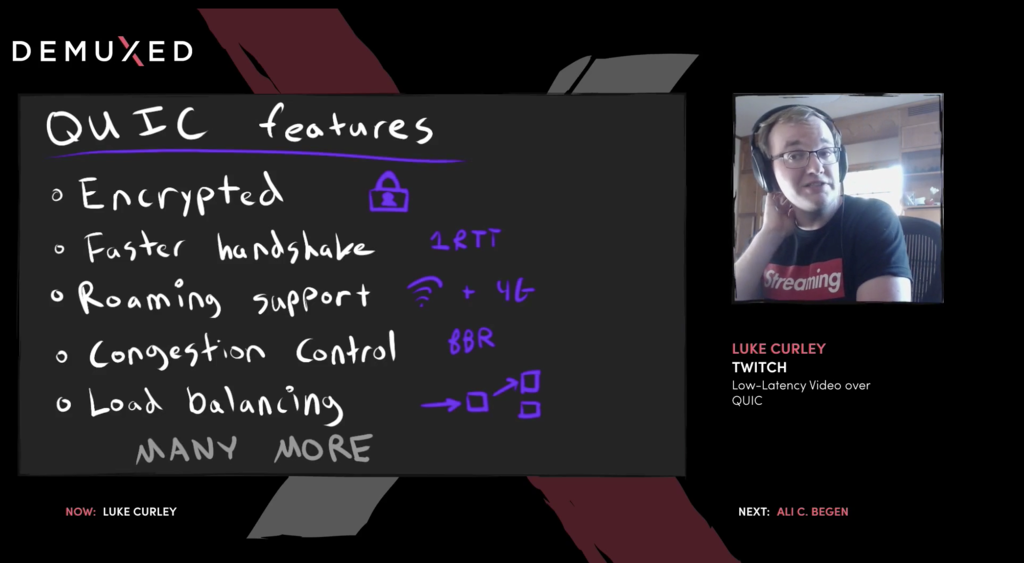

Luke Curley from Twitch declared that TCP simply wasn’t cutting it for WebRTC-based streaming, touting QUIC as the way to improve the overall experience. QUIC, the transport that HTTP/3 runs on, is a new multiplexed transport built on top of UDP. It is designed to improve streaming with features such as multiple independent streams that share a single connection yet can be closed individually, and switching between Wi-Fi and cellular networks with no interruption. Twitch Warp and Facebook RUSH are two examples of companies building solutions on QUIC. Join the Media over QUIC mailing list for more information.
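Why independent streams matter is easiest to see with a toy model. The sketch below is not QUIC and not anything shown in the talk; it only compares when packets become readable by the application if a single ordered byte stream carries everything (TCP-like) versus if ordering is enforced per stream (QUIC-like). The packet data and all names are made up for illustration.

```typescript
// Toy model of head-of-line blocking. Times are in milliseconds and the
// packet list is invented for the example.

interface Packet {
  seq: number;     // global send order
  stream: string;  // logical stream the packet belongs to
  arrival: number; // time the packet finally arrives (after any retransmit)
}

// One ordered byte stream: a packet is readable only once every packet
// with a lower sequence number has arrived.
function deliverInOrder(packets: Packet[]): Record<number, number> {
  const sorted = packets.slice().sort((a, b) => a.seq - b.seq);
  const delivered: Record<number, number> = {};
  let ready = 0;
  for (const p of sorted) {
    ready = Math.max(ready, p.arrival);
    delivered[p.seq] = ready;
  }
  return delivered;
}

// Independent streams: ordering is enforced only within each stream, so a
// late packet on one stream does not hold back the others.
function deliverPerStream(packets: Packet[]): Record<number, number> {
  const byStream: Record<string, Packet[]> = {};
  for (const p of packets) {
    (byStream[p.stream] = byStream[p.stream] || []).push(p);
  }
  const delivered: Record<number, number> = {};
  for (const stream of Object.keys(byStream)) {
    const perStream = deliverInOrder(byStream[stream]);
    for (const seq of Object.keys(perStream)) {
      delivered[Number(seq)] = perStream[Number(seq)];
    }
  }
  return delivered;
}

// Packet 1 (video) is lost and only arrives at t=90 after a retransmit.
const packets: Packet[] = [
  { seq: 0, stream: "video", arrival: 10 },
  { seq: 1, stream: "video", arrival: 90 },
  { seq: 2, stream: "audio", arrival: 30 },
  { seq: 3, stream: "audio", arrival: 40 },
];

console.log(deliverInOrder(packets));   // audio packets wait until t=90
console.log(deliverPerStream(packets)); // audio packets readable at t=30 and t=40
```

Real transports add congestion control, flow control, and retransmission logic that this model ignores; the point is only that a loss on one stream stalls everything behind it when there is a single ordered stream.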

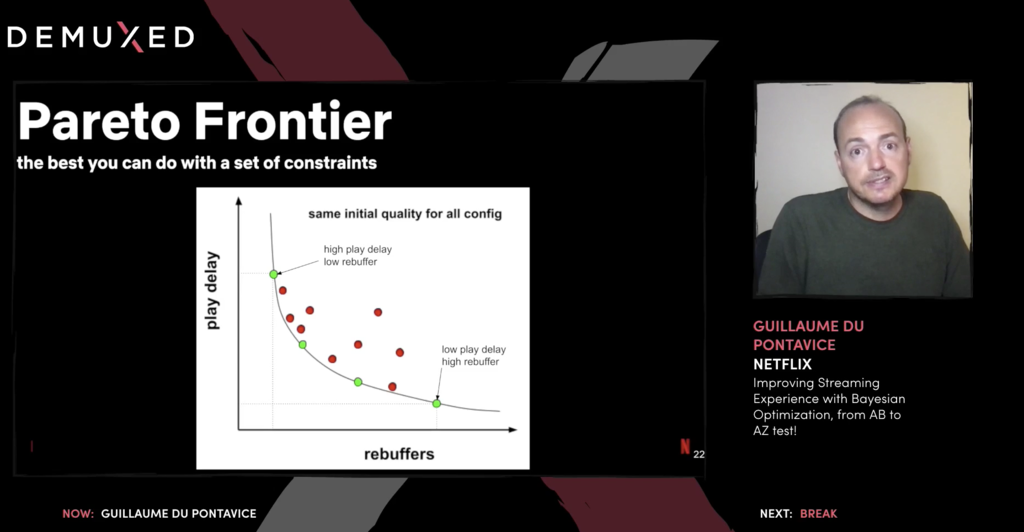

Guillaume Du Pontavice from Netflix explored how we can improve the streaming experience with Bayesian optimization. The goal for all video streaming is high quality, low delay, and zero buffering; however, getting all three is only possible in the most ideal of circumstances. Adaptive bitrate streaming helps but doesn’t solve the underlying issues, so he instead looked at which level of compromise worked best for each of the three parameters. Using A/B testing, he determined a set of best options for real-world enjoyment given these constraints, creating a curve of ideal ratios between play delay and rebuffering (as quality is king).

Content Presentation

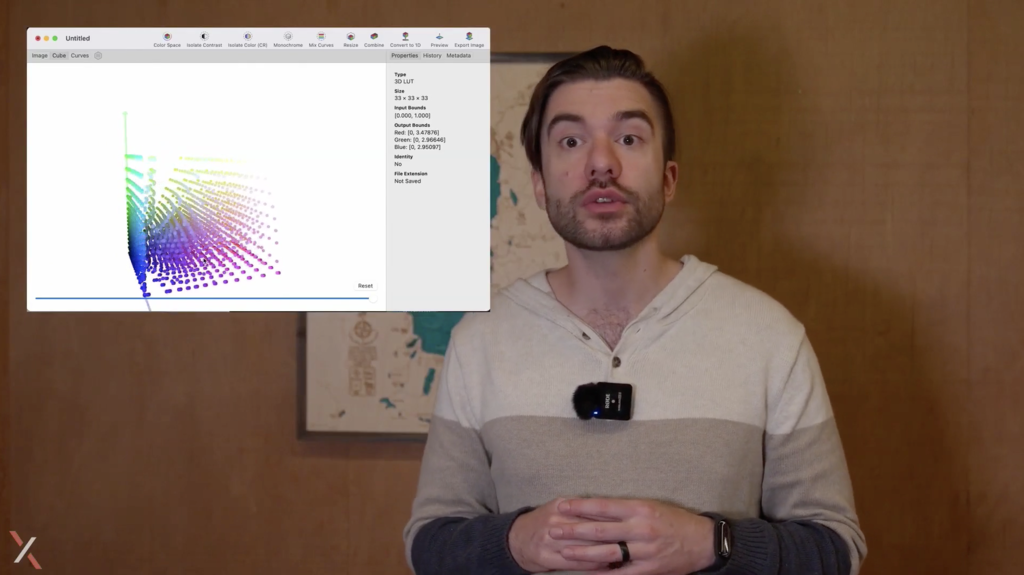

Steve Robertson from YouTube spoke about creating more unified HDR experiences that also look good on SDR devices. He used a lot of live examples, recording much of the talk in nature, to demonstrate how easy it is to over- or under-saturate an image without any predefined limits, to the point where HDR content looks worse than SDR content. Creating better defaults for HDR content, with a view to making HDR predictable with only the open content provided, is an ideal goal that we are already beginning to see in products like DaVinci Resolve and LumaFusion.

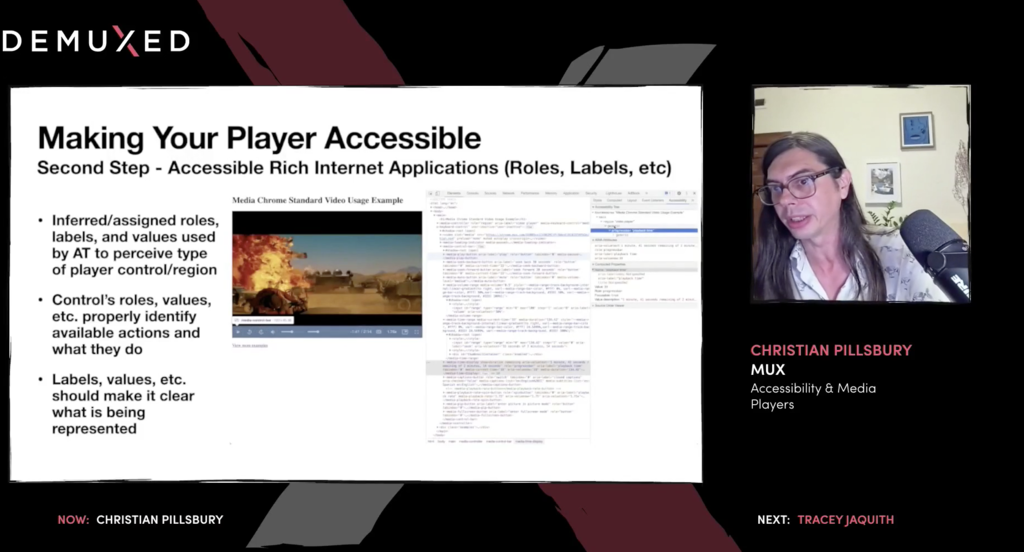

Christian Pillsbury from Mux articulated how important accessibility (a11y) considerations are when designing media players. We need to design the players we make for people with vision impairments as well as those with mobility impairments. Keyboard interaction is easy to support but often overlooked, even though many people rely on it to navigate UIs, not only with actual keyboards but also with other tools such as mouth sticks, head wands, and more. Taking advantage of the guidelines provided by Accessible Rich Internet Applications (ARIA) is key to ensuring maximum compatibility with tools like screen readers and speech recognition while using a video player. A few extra lines of code can make or break the experience for somebody who uses other methods of content consumption.
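As a rough illustration of those "few extra lines", the sketch below maps keyboard input to player actions and computes ARIA attributes for a custom seek bar. The shortcut table and function names are assumptions chosen for the example, not anything presented in the talk; the `role` and `aria-*` attribute names are standard ARIA slider attributes.

```typescript
// Sketch of keyboard and ARIA support for a custom video player.
// The key bindings below are a hypothetical convention.

type PlayerAction =
  | "togglePlay"
  | "seekBackward"
  | "seekForward"
  | "toggleMute"
  | "toggleFullscreen";

const KEY_BINDINGS: Record<string, PlayerAction> = {
  " ": "togglePlay",
  k: "togglePlay",
  ArrowLeft: "seekBackward",
  ArrowRight: "seekForward",
  m: "toggleMute",
  f: "toggleFullscreen",
};

// Translate a KeyboardEvent.key value into a player action, or null if the
// key is not bound. Single letters are matched case-insensitively.
function actionForKey(key: string): PlayerAction | null {
  const normalized = key.length === 1 ? key.toLowerCase() : key;
  return Object.prototype.hasOwnProperty.call(KEY_BINDINGS, normalized)
    ? KEY_BINDINGS[normalized]
    : null;
}

// Format seconds as m:ss so screen readers announce a readable position.
function formatTime(totalSeconds: number): string {
  const s = Math.max(0, Math.floor(totalSeconds));
  const minutes = Math.floor(s / 60);
  const seconds = s % 60;
  return `${minutes}:${seconds < 10 ? "0" : ""}${seconds}`;
}

// Attributes for a custom (non-native) seek bar element.
function seekBarAria(currentSeconds: number, durationSeconds: number): Record<string, string> {
  return {
    role: "slider",
    "aria-label": "Seek",
    "aria-valuemin": "0",
    "aria-valuemax": String(Math.floor(durationSeconds)),
    "aria-valuenow": String(Math.floor(currentSeconds)),
    "aria-valuetext": `${formatTime(currentSeconds)} of ${formatTime(durationSeconds)}`,
  };
}
```

In a real player, `actionForKey(event.key)` would be called from a `keydown` listener on the player container, and the seek bar attributes would be applied with `setAttribute` along with `tabindex="0"` so the control can receive keyboard focus. Native elements such as `<button>` and `<input type="range">` provide much of this behavior without extra code.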

In conclusion, Demuxed this year was a testament to how remote conferences can succeed with proper attention to preparation and a passionate community behind them. The video developer community is thriving at a time when quality video over the internet has never been more important. Check out how Dolby.io might be a solution for your video streaming needs today!

Demuxed 2020 Highlights

The Video-Dev Community

Demuxed is the community for engineers working with video. If you want to learn from experts or contribute your own knowledge, the Slack group offers active and thriving discussions on a variety of video-related subjects.

Some of our favorite channels in the Slack Community include #audio, #dolby, and #webrtc. You can learn more about community events & meetups from the demuxed.com website.

Questions Answered

The talks were pre-recorded, but each speaker was available to answer questions about their talk. During the breaks, we also invited a few of our own audio experts into the Slack group to answer the questions folks had about audio.

- Paul Boustead, Chief Architect of Dolby Communications group, has spoken on topics such as Improving Intelligibility with Spatial Audio. He joined to answer questions about real-time audio communications and WebRTC.

- Nick Engel, Sr. Director of Media API R&D, recently spoke at an AES community event on the history of noise suppression. He made himself available to discuss noise reduction technology with a modern take on content creation.

- Sripal Mehta, Head of Media APIs for Dolby.io, recently spoke at the AES Show Product Development Symposium on Delivering Scalable AI-based Processing. He wrapped up the final day with some Q&A to focus video-tech on producing high-quality audio.