I am thrilled to share the latest version of Dolby.io’s Real-time Streaming (RTS) Plugin and SDK for Unity, designed to transform the way real-time audio is experienced in games. With this plugin, game developers can create immersive soundscapes from streaming audio and video sources that enhance gameplay and elevate the player’s overall experience.

The Real-time Streaming Plugin for Unity supports both stereo and 5.1 (six-channel) audio from an incoming media stream, so you can deliver high-quality audio in either format. Because developers need solutions that fit their existing workflows, we have built in multiple implementation paths. Auto mode generates the virtual speakers and layout for stereo and 5.1 setups for you. For more control, the Virtual Speaker prefab lets you quickly manage arrays of virtual audio sources.

A standout feature of the Virtual Speaker prefab is its ability to lay out the stereo or 5.1 mix in a conventional speaker pattern and attach it to a game object’s 3D position. Developers can tailor the layout to their requirements simply by dragging and adjusting the prefab.
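
Laying out a mix in a conventional pattern around an anchor point can be sketched in a few lines. The Python below is a minimal, engine-agnostic illustration, not the plugin’s implementation: the speaker angles follow the ITU-R BS.775 reference layout (front left/right at ±30°, surrounds at ±110°, within the recommended 100° to 120° range), while the `speaker_positions` helper, the `radius` parameter, and placing the LFE at the center are assumptions made for this example. It uses Unity’s axis convention (+x right, +y up, +z forward).

```python
import math
from dataclasses import dataclass
from typing import Dict

# Nominal horizontal angles in degrees (0 = straight ahead, positive = right),
# following the ITU-R BS.775 reference layout. Placing the non-directional LFE
# at the center is an assumption for this sketch, not a plugin default.
LAYOUT_5_1: Dict[str, float] = {
    "L": -30.0, "R": 30.0, "C": 0.0, "LFE": 0.0, "Ls": -110.0, "Rs": 110.0,
}
LAYOUT_STEREO: Dict[str, float] = {"L": -30.0, "R": 30.0}


@dataclass
class Vec3:
    x: float
    y: float
    z: float


def speaker_positions(anchor: Vec3, yaw_deg: float, radius: float,
                      layout: Dict[str, float]) -> Dict[str, Vec3]:
    """Place each virtual speaker on a horizontal circle around `anchor`.

    Uses Unity's axis convention (+x right, +y up, +z forward); `yaw_deg`
    rotates the whole layout about the vertical axis, e.g. to follow a head.
    """
    positions = {}
    for name, angle in layout.items():
        theta = math.radians(angle + yaw_deg)
        positions[name] = Vec3(
            x=anchor.x + radius * math.sin(theta),
            y=anchor.y,  # all speakers sit at the anchor's height
            z=anchor.z + radius * math.cos(theta),
        )
    return positions


# Example: a 5.1 ring of radius 2 m around a head at 1.6 m height.
for name, p in speaker_positions(Vec3(0.0, 1.6, 0.0), 0.0, 2.0, LAYOUT_5_1).items():
    print(f"{name:>3}: ({p.x:+.2f}, {p.y:.2f}, {p.z:+.2f})")
```

Because every position is derived from the anchor and its yaw, moving or turning the anchor carries the whole layout with it, which is what keeps a head-pinned layout fixed relative to the listener.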

The included gizmo visualizes each speaker’s sound emission pattern and falloff in the editor, making the layout easy to check during development.

Rethinking Game-Engine Audio from a Streaming Perspective

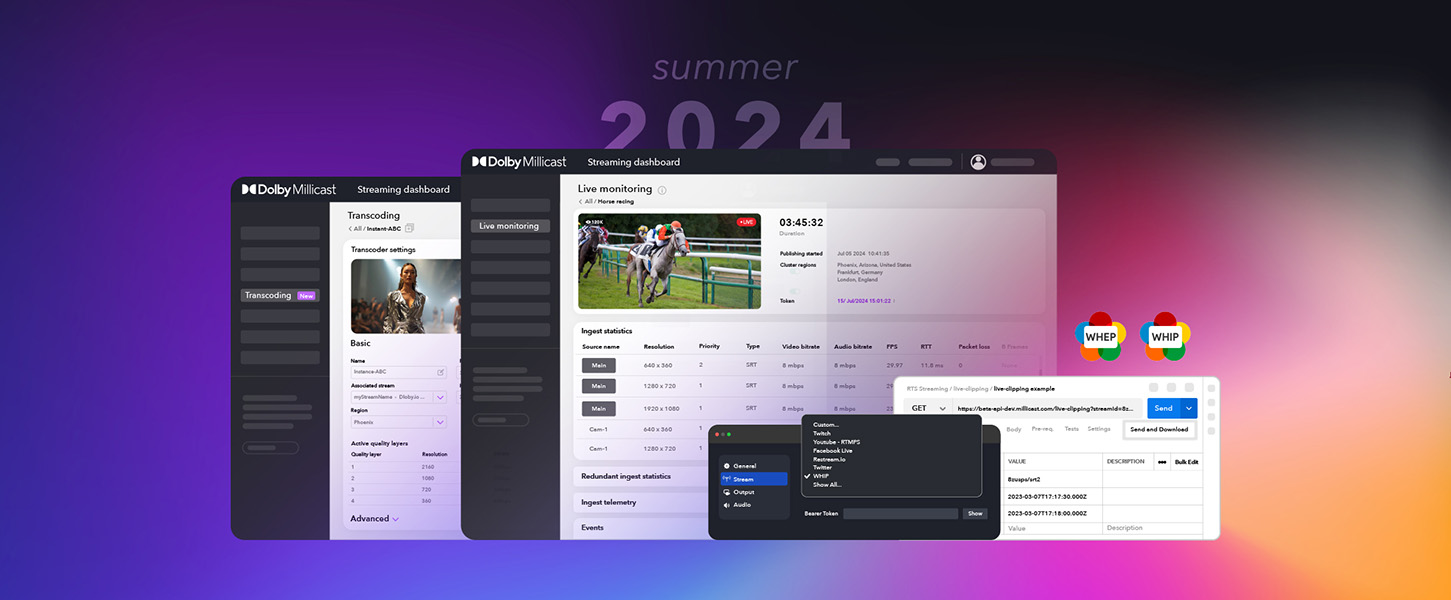

Building in-game-engine experiences around audio sources from low-latency streams opens up new opportunities and a new level of interactivity. Dolby.io audio and video streams can come from live, dynamic sources broadcast from anywhere in the world. Media can be published from a wide variety of capture tools and protocols, and OBS, WHIP/WHEP, SRT, and popular media encoders are all natively supported by the Dolby.io platform. Our Real-time Streaming SDKs for JavaScript and Native, along with the CLI, also make it possible to link generative content to dynamic media sources and stream it instantly into the game engine. While this audio workflow pattern is new, I truly believe real-time delivery will revolutionize in-game-engine experiences.

If you are a game developer looking for new opportunities, look at the growth in industries such as architecture, automotive, aerospace, digital twins, sports betting, virtual film and TV production, live entertainment, government, and industrial design. These industries increasingly use game-engine technology for visualization, prototyping, and simulation. Despite recent changes in the game and tech industries, these markets continue to grow steadily as companies recognize the benefits of real-time rendering and interactive experiences.

Shifting your Unity development toward in-game-engine experiences with rich, immersive audio built on real-time streaming technologies will significantly level up your game as a developer.

Let’s delve into some of the exciting use cases enabled by our plugin:

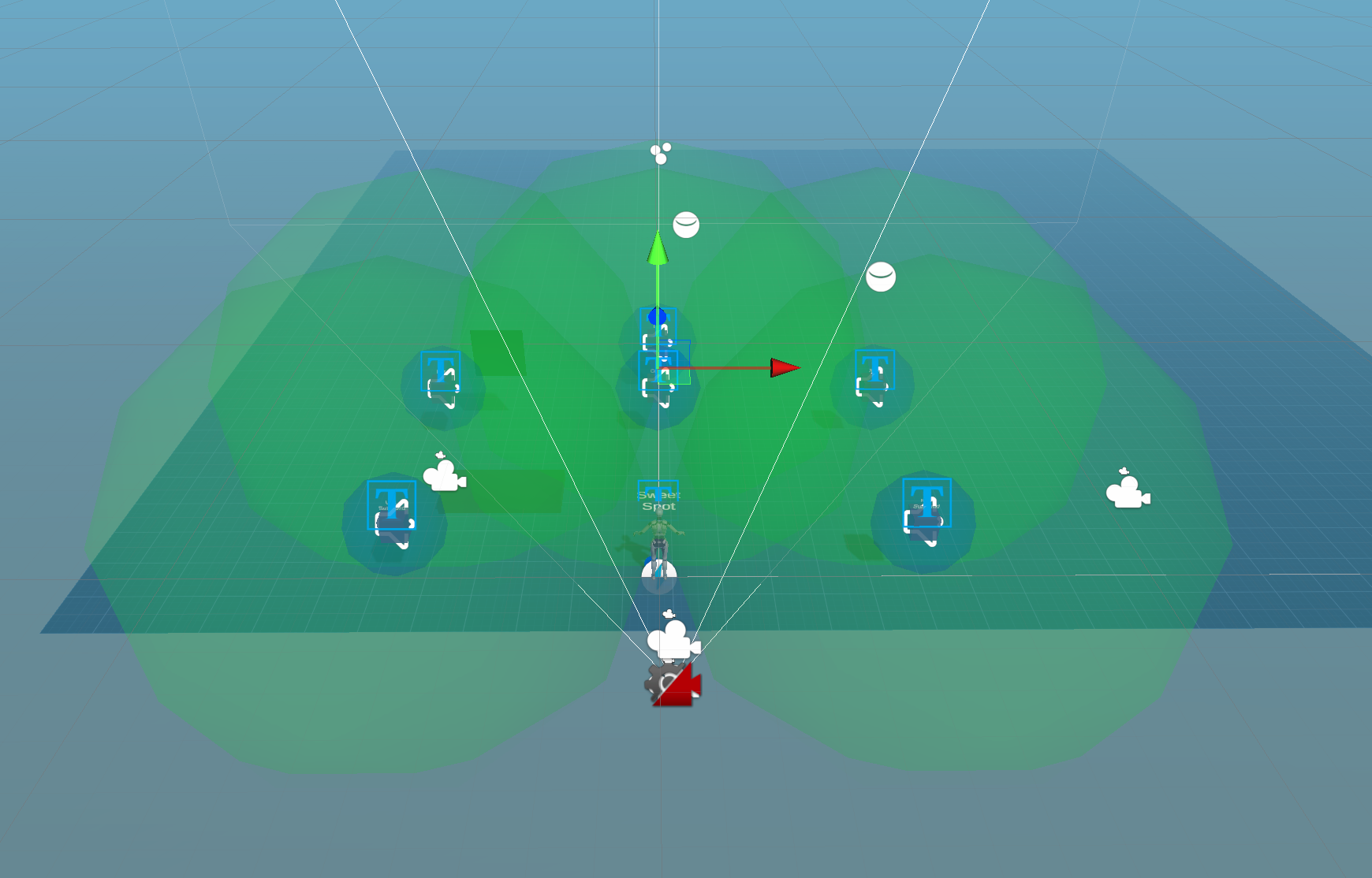

Use Case: Full Audio Immersion

Imagine players completely immersed in the game’s environment, as if they were sitting in the sweet spot of a surround sound system. With the RTS Plugin for Unity, developers can achieve this by pinning the speaker layout pattern to the game object representing the avatar’s head. As the avatar moves through the scene, the audio sources stay fixed relative to the listener, enveloping them in a captivating multichannel audio experience. Developers also have full control over volume, spread, and roll-off, so they can fine-tune the sound to match their creative vision.

Use Case: 3D Game Object Positioned Sound

In scenarios that aim to emulate realistic audio, such as a digital twin of a home entertainment room, the RTS Plugin is a strong fit. With the 5.1 Virtual Speakers, each channel’s audio source can be positioned individually and pinned to a game object in the scene. As the avatar moves around, each channel grows louder or quieter depending on the player’s distance from the corresponding virtual speaker. This adds a new level of immersion and spatial awareness to the game world.
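
The proximity effect described above comes down to distance-based attenuation. As a rough illustration, the sketch below uses a clamped inverse-distance model: full gain inside a minimum distance, then gain proportional to `min_distance / distance`, so every doubling of distance costs about 6 dB. This model is an assumption chosen for clarity; in Unity the actual curve is configured per AudioSource through properties such as `rolloffMode`, `minDistance`, and `maxDistance`, and the plugin’s defaults may differ. The helper names and the room coordinates are hypothetical.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float, float]


def inverse_distance_gain(distance: float, min_distance: float = 1.0,
                          max_distance: float = 500.0) -> float:
    """Clamped inverse-distance gain: 1.0 inside min_distance,
    min_distance / distance beyond it, constant past max_distance."""
    if min_distance <= 0 or max_distance < min_distance:
        raise ValueError("require 0 < min_distance <= max_distance")
    d = min(max(distance, min_distance), max_distance)
    return min_distance / d


def gain_to_db(gain: float) -> float:
    """Convert a linear amplitude gain to decibels."""
    return 20.0 * math.log10(gain)


def speaker_gains(listener: Point, speakers: Dict[str, Point],
                  min_distance: float = 1.0) -> Dict[str, float]:
    """Gain of each virtual speaker as heard at `listener` (hypothetical helper)."""
    return {
        name: inverse_distance_gain(math.dist(listener, pos), min_distance)
        for name, pos in speakers.items()
    }


# Example: a listener walks toward the front-left speaker of a small room.
room = {"L": (-1.5, 1.0, 3.0), "R": (1.5, 1.0, 3.0), "C": (0.0, 1.0, 3.0)}
for z in (0.0, 1.5, 2.5):
    gains = speaker_gains((-1.5, 1.0, z), room)
    print(z, {name: round(gain_to_db(g), 1) for name, g in gains.items()})
```

Unity applies this kind of attenuation itself once each channel has its own positioned audio source; the sketch only makes the relationship between distance and level explicit.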

We understand that developers have specific audio needs and often work with third-party plugins. That’s why our plugin integrates with Unity’s standard AudioSource component, keeping it compatible with a wide range of existing and future audio extensions.

Our subscriber component receives and decodes incoming 5.1 audio streams and delivers each channel to its own audio source. Unity then renders the sound at the listener, mixing the rest of the game’s audio with the audio emitted from the individual sources. The result is a cohesive audio experience for players, whether they use headphones or speakers.
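
Routing a decoded 5.1 stream to separate audio sources amounts to splitting interleaved sample frames into one buffer per channel. The sketch below shows that step in plain Python as an illustration only: the `deinterleave` helper is hypothetical, and the channel order (L, R, C, LFE, Ls, Rs, the common WAV/SMPTE ordering) is an assumption that should be checked against what the decoder actually emits.

```python
from typing import Dict, List, Sequence

# Assumed channel order of each interleaved 5.1 frame (common WAV/SMPTE order).
CHANNELS_5_1 = ("L", "R", "C", "LFE", "Ls", "Rs")


def deinterleave(samples: Sequence[float],
                 channel_names: Sequence[str] = CHANNELS_5_1) -> Dict[str, List[float]]:
    """Split interleaved PCM [L0, R0, C0, LFE0, Ls0, Rs0, L1, ...]
    into one list of samples per channel."""
    n = len(channel_names)
    if len(samples) % n != 0:
        raise ValueError(f"sample count {len(samples)} is not a multiple of {n} channels")
    # Channel i occupies every n-th sample, starting at offset i.
    return {name: list(samples[i::n]) for i, name in enumerate(channel_names)}


# Example: two frames of 5.1 audio, then a stereo buffer.
print(deinterleave([float(v) for v in range(12)]))
print(deinterleave([0.1, -0.1, 0.2, -0.2], ("L", "R")))
```

Strided slicing keeps the sketch short; a real-time audio path would more likely write into preallocated buffers than allocate new lists for every block of samples.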

Experience Real-time Streaming for Unity

The RTS Plugin for Unity opens up a world of possibilities for game developers, enabling them to create captivating audio experiences that enhance immersion and engagement. We are excited to see how this plugin will empower developers to take their games to the next level, and we look forward to witnessing the incredible audio journeys they will create.

Experience the future of game audio with the RTS Plugin for Unity. Download the plugin directly from our GitHub account, and don’t forget to check out the getting-started docs: our easy-to-follow examples can get your project streaming in just a few minutes. If you like the plugin, give our GitHub repo a star.